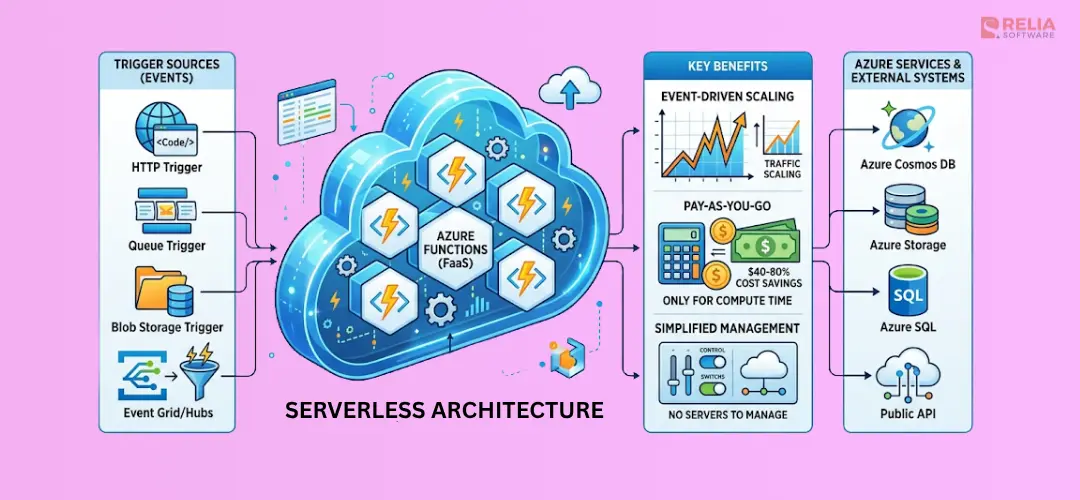

Azure Functions serverless architecture is a cloud approach for building APIs, automations, and event-driven systems without managing servers.

You deploy a container to handle 50 requests per minute. At 3 AM it handles zero, but you're still paying $73/month for idle compute. Azure Functions flips that model: zero traffic, zero cost. And when a flash sale hits 10,000 requests per second, it scales automatically without a single config change.

That's the promise of Azure Functions serverless architecture, and this guide shows you exactly how to deliver on it. You'll learn hosting plans, architecture patterns, Durable Functions workflows, cold start fixes, security, monitoring, and cost optimization.


What Is Azure Functions Serverless Architecture?

Azure Functions serverless architecture is a cloud model for building and running applications on Azure without provisioning or managing servers.

Developers create functions that run only when triggered by events such as HTTP requests, queue messages, timers, or blob uploads. Azure handles infrastructure, scaling, and runtime management automatically, while pricing is based mainly on actual execution and resource use instead of idle compute.

In a traditional deployment, you provision VMs or containers that run continuously, billing you whether or not traffic arrives. In the serverless execution model:

- An event (HTTP request, queue message, timer, blob upload) triggers your function.

- If no warm instance of your app is available, Azure allocates a new one (a cold start); otherwise an already-running instance picks up the event.

- Your function code executes, returning a result or producing a side effect.

- The instance stays warm to handle later events and is deallocated after a period of inactivity.

You are billed for the actual compute time consumed, not idle time. A 2021 Microsoft Research study found that, when provisioned with the same amount of resources, serverless platforms hosted on Harvest VMs were 45% to 89% cheaper than regular VMs.

Azure Functions rarely works in isolation. In most real projects it is combined with other Azure services such as Logic Apps, Event Grid, and Service Bus: Functions remains the core compute layer, while these services handle workflows, event routing, and messaging.

- Azure Functions: Event-driven code execution; the focus of this guide.

- Azure Logic Apps: Low-code workflow automation with 400+ connectors.

- Azure Event Grid: Fully managed event routing at massive scale, supporting push-based reactive architectures.

- Azure Service Bus: Enterprise-grade message broker for reliable async communication between services.

These four services form the foundation of most Azure serverless architecture patterns discussed below.

Key Features of Azure Functions

Azure Functions is Microsoft's Function-as-a-Service (FaaS) platform. It supports Node.js, Python, .NET, Java, PowerShell, and custom handlers, giving teams flexibility in language choice without sacrificing the managed infrastructure model.

Triggers, Bindings, and the Execution Model

Every Azure Function has exactly one trigger, the event source that causes it to execute. Azure Functions also supports input and output bindings, which are declarative connections to other Azure services that eliminate boilerplate data-access code.

| Component | Role | Example |
|---|---|---|
| Trigger | Starts execution | HTTP request, queue message, timer |
| Input binding | Read data without SDK code | Read a blob, fetch a Cosmos DB document |
| Output binding | Write data without SDK code | Write to a queue, insert into Table Storage |

This binding model reduces boilerplate. An HTTP-triggered function that reads from Cosmos DB and writes to a Service Bus queue can have zero SDK imports: the Functions host fetches the input and delivers the output for you.
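To make that division of labor concrete, here is a toy model of what the host does around a handler with one input binding and one output binding. It is not the Functions runtime: `runWithBindings`, the `documents` map standing in for Cosmos DB, and the `queue` array standing in for Service Bus are all hypothetical.

```javascript
// Toy model of binding injection. NOT the Azure Functions host:
// every name here is a hypothetical stand-in for illustration.
const documents = new Map([["42", { id: "42", name: "Widget Pro" }]]); // "Cosmos DB"
const queue = []; // "Service Bus queue"

// The host resolves the input binding, calls your handler, then performs
// the output binding with whatever the handler returned.
function runWithBindings(handler, request) {
  const inputDoc = documents.get(request.params.id) ?? null; // input binding
  const { response, outputMessage } = handler(request, inputDoc);
  if (outputMessage !== undefined) {
    queue.push(outputMessage); // output binding
  }
  return response;
}

// Your code: plain data in, plain data out, no SDK imports.
function getProductHandler(request, product) {
  if (!product) {
    return { response: { status: 404 } };
  }
  return {
    response: { status: 200, body: product },
    outputMessage: { event: "ProductViewed", id: product.id },
  };
}

const ok = runWithBindings(getProductHandler, { params: { id: "42" } });
const missing = runWithBindings(getProductHandler, { params: { id: "7" } });
console.log(ok.status, missing.status, queue.length); // 200 404 1
```

Because the handler only sees plain values, it can be unit tested without any Azure service running.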

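Pro Tip: Bindings are declared in function.json for script-based programming models (such as Node.js v3 and Python v1) or in code for newer ones: attributes in C#, decorators in the Python v2 model, and registration options in the Node.js v4 model. For .NET, the isolated worker model is now recommended for all apps because it runs your code in a separate process, decoupled from the Functions host runtime; support for the in-process model ends on November 10, 2026.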

Hosting Plans Compared

Choosing the right hosting plan is one of the most consequential decisions in any Azure Functions project. Each plan makes different tradeoffs on cost, cold start behavior, and scaling.

| Plan | Scaling | Cold Start | Cost Model | Best For |
|---|---|---|---|---|
| Consumption | Auto, scales to zero (per-app instance limits apply) | Yes (variable) | Per-execution + GB-s | Dev/test, low-volume APIs, event processors |
| Flex Consumption | Auto, scales to zero, with optional always-ready instances | Minimized | Per-execution + optional always-ready baseline | Production APIs needing cold start control (recommended 2026) |
| Premium | Auto, with always-warm instances | None | Per vCPU/memory time of allocated instances (min 1 instance) | High-throughput APIs, VNet-required workloads |
| Dedicated (App Service) | Manual or auto (App Service rules) | None (with Always On enabled) | Per-VM-hour | Predictable load, shared App Service plan, long-running |

The Flex Consumption plan is the 2026 recommended default for production workloads. It combines the per-execution pricing of the Consumption plan with the ability to configure a minimum number of always-ready instances, eliminating cold starts on critical paths without paying for a full Premium plan's minimum reserved capacity.

The Consumption plan remains ideal for background jobs, event processors, and workloads with unpredictable or low traffic where occasional cold starts are acceptable.

The Premium vs. Consumption decision often comes down to three factors: cold start tolerance, VNet integration requirements, and sustained throughput needs. Premium costs more at rest but provides predictable latency and supports private networking.

Serverless Architecture Patterns

Azure Functions enables several powerful architectural patterns. Understanding them is essential for designing robust serverless microservices on Azure.

Event-Driven Architecture with Event Grid

Event Grid is Azure's native event routing backbone. Azure services publish events (blob created, resource group updated, custom app event). Event Grid fans them out to subscribers, including Azure Functions.

A typical flow:

- New product image uploaded to Blob Storage

- Event Grid fires a `BlobCreated` event

- Azure Function resizes the image

- Thumbnails written back to storage

The function only runs when uploads occur. Zero uploads = zero cost.

This event-driven microservices pattern is ideal for decoupling services, audit logging, multi-consumer event broadcasting, and reactive data pipelines.
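Inside the function, the first job is deciding which events to act on. The sketch below reads the Event Grid schema fields `eventType`, `subject`, and `data.url` of Blob Storage events; the `images` source container, the `thumbnails` target container, and the naming scheme are hypothetical, and the resize step itself is left out. Filtering on the source container matters in practice: if thumbnails land in a container the subscription also watches, every write would trigger the function again.

```javascript
// Plans thumbnail work from a batch of Event Grid events.
// Assumptions (hypothetical): originals live in the "images" container,
// thumbnails go to a separate "thumbnails" container.
const SOURCE_PREFIX = "/blobServices/default/containers/images/blobs/";

function planThumbnails(events) {
  return events
    // Only react to newly created blobs in the source container.
    .filter(
      (e) =>
        e.eventType === "Microsoft.Storage.BlobCreated" &&
        e.subject.startsWith(SOURCE_PREFIX)
    )
    .map((e) => {
      const blobName = e.subject.slice(SOURCE_PREFIX.length);
      // Strip the file extension (without crossing a "/") and add a suffix.
      const baseName = blobName.replace(/\.[^./]+$/, "");
      return { sourceUrl: e.data.url, targetBlob: `thumbnails/${baseName}-thumb.jpg` };
    });
}

const sample = [
  {
    eventType: "Microsoft.Storage.BlobCreated",
    subject: "/blobServices/default/containers/images/blobs/shoe.png",
    data: { url: "https://example.blob.core.windows.net/images/shoe.png" },
  },
  {
    eventType: "Microsoft.Storage.BlobDeleted",
    subject: "/blobServices/default/containers/images/blobs/old.png",
    data: { url: "https://example.blob.core.windows.net/images/old.png" },
  },
  {
    // A thumbnail write: ignored, so the function cannot retrigger itself.
    eventType: "Microsoft.Storage.BlobCreated",
    subject: "/blobServices/default/containers/thumbnails/blobs/shoe-thumb.jpg",
    data: { url: "https://example.blob.core.windows.net/thumbnails/shoe-thumb.jpg" },
  },
];

const plan = planThumbnails(sample);
console.log(plan);
```

Only the first event produces work; the deletion and the thumbnail write are skipped.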

Serverless Microservices with Service Bus

For guaranteed delivery, ordering (through message sessions), and dead-lettering, Service Bus is the right broker. Each microservice publishes domain events to a topic, and subscriber functions consume from dedicated subscriptions.

Benefits:

- At-least-once delivery: messages survive function failures.

- Competing consumers: multiple function instances process messages in parallel.

- Dead-letter queues: failed messages are captured for inspection and replay.

This pattern forms the backbone of resilient serverless microservices on Azure, where services are independently deployable and failures don't cascade.
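The three guarantees above are easier to reason about with a small simulation. This is not the Service Bus SDK: `deliverAll`, the handler, and the in-memory `processedIds` set are hypothetical, standing in for the broker's max delivery count setting and for an idempotency store you would keep in a database. Retries here happen immediately, whereas the real broker redelivers after a message is abandoned or its lock expires.

```javascript
// In-memory simulation of Service Bus-style delivery (illustration only).
// MAX_DELIVERY_COUNT mirrors a queue's max delivery count setting;
// processedIds stands in for a durable idempotency store.
const MAX_DELIVERY_COUNT = 3;

function deliverAll(messages, handler) {
  const deadLetter = [];
  const processedIds = new Set();

  for (const message of messages) {
    let deliveryCount = 0;
    let settled = false;
    while (!settled) {
      deliveryCount++;
      try {
        // Idempotency check: a duplicate of an already-processed message is
        // acknowledged without repeating its side effects.
        if (!processedIds.has(message.messageId)) {
          handler(message, deliveryCount);
          processedIds.add(message.messageId);
        }
        settled = true; // completed
      } catch (err) {
        if (deliveryCount >= MAX_DELIVERY_COUNT) {
          deadLetter.push({ ...message, reason: err.message });
          settled = true; // moved to the dead-letter queue
        }
        // Otherwise the message was abandoned and is delivered again.
      }
    }
  }
  return { processed: [...processedIds], deadLetter };
}

// Hypothetical handler: "flaky" fails once, "poison" always fails.
const sideEffects = [];
function handler(message, attempt) {
  if (message.body === "poison") throw new Error("cannot parse body");
  if (message.body === "flaky" && attempt === 1) throw new Error("timeout");
  sideEffects.push(message.messageId);
}

const outcome = deliverAll(
  [
    { messageId: "m1", body: "ok" },
    { messageId: "m2", body: "flaky" },
    { messageId: "m3", body: "poison" },
    { messageId: "m1", body: "ok" }, // duplicate delivery of m1
  ],
  handler
);
console.log(outcome.processed, outcome.deadLetter.length, sideEffects);
```

The transient failure succeeds on redelivery, the poison message ends up dead-lettered for inspection, and the duplicate of `m1` produces no second side effect.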

API Backend Pattern (HTTP Trigger + API Management)

The most common pattern: HTTP-triggered Azure Functions sit behind Azure API Management (APIM). APIM handles rate limiting, auth, caching, and the developer portal. Functions handle business logic.

The separation is clean. Functions are stateless compute units. APIM is the public contract. Swap implementations, version APIs, and apply policies without changing downstream consumers.

Durable Functions: Stateful Serverless Workflows

Durable Functions extends Azure Functions with stateful, reliable workflow orchestration, solving some of the hardest problems in distributed systems (checkpointing, retries, long waits) without requiring you to manage state machines yourself.

Three function types compose Durable workflows:

- Orchestrator function: Defines the workflow in code (C#, JavaScript, Python, and other supported languages) that must be deterministic. It survives restarts because the runtime replays the recorded execution history to restore its state.

- Activity function: A single unit of work (call an API, write to a database). Can run in parallel.

- Entity function: Manages small pieces of persistent state with explicit operations (increment a counter, update a record).
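The replay idea behind orchestrators can be illustrated with a toy engine built on JavaScript generators (Node.js durable-functions orchestrators are also written as `function*` generators that `yield`). Everything below is hypothetical and far simpler than the real runtime: each activity result is checkpointed into a `history` array, and after a simulated crash the orchestrator is re-run from the top, with recorded results fed back instead of re-executing the activities. This is why orchestrator code must be deterministic. In this toy each activity runs exactly once; the real runtime only guarantees at-least-once activity execution, so activities should still be idempotent.

```javascript
// Toy model of orchestrator replay, for illustration only.
// NOT the durable-functions API: every name here is hypothetical.

// Orchestrator: yields activity requests and receives their results.
// It must be deterministic, because it is re-run from the top on replay.
function* orderOrchestrator(orderId) {
  const reserved = yield { activity: "reserveInventory", input: orderId };
  const charged = yield { activity: "chargePayment", input: orderId };
  return { orderId, reserved, charged };
}

const activities = {
  reserveInventory: (id) => `reserved:${id}`,
  chargePayment: (id) => `charged:${id}`,
};

// Results already recorded in `history` are replayed instead of re-executed;
// `maxNewSteps` lets us simulate a host crash partway through.
function runOrchestration(orchestrator, input, history, executed, maxNewSteps = Infinity) {
  const gen = orchestrator(input);
  let step = 0;
  let newSteps = 0;
  let next = gen.next();

  while (!next.done) {
    const { activity, input: activityInput } = next.value;
    let output;
    if (step < history.length) {
      output = history[step]; // replay: reuse the checkpointed result
    } else {
      if (newSteps === maxNewSteps) {
        throw new Error("simulated crash");
      }
      output = activities[activity](activityInput); // first real execution
      executed.push(activity);
      history.push(output); // checkpoint before continuing
      newSteps++;
    }
    step++;
    next = gen.next(output);
  }
  return next.value;
}

const history = [];
const executed = [];
try {
  runOrchestration(orderOrchestrator, "A1", history, executed, 1); // crashes after one activity
} catch (err) {
  console.log(err.message); // simulated crash
}
const result = runOrchestration(orderOrchestrator, "A1", history, executed); // resumes via replay
console.log(result, executed);
```

On the second run, `reserveInventory` is served from history, so only `chargePayment` actually executes.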

Key Durable Functions workflow patterns:

| Pattern | Use Case | How It Works |
|---|---|---|
| Function Chaining | Sequential pipeline (A → B → C) | Each activity's output feeds the next |
| Fan-out/Fan-in | Parallel processing with aggregation | Orchestrator spawns N activities in parallel, awaits all |
| Async HTTP API | Long-running operation with status polling | Client polls a status endpoint; orchestrator updates state |
| Human Interaction / Approval | Require human approval before proceeding | Orchestrator waits for an external event (approval webhook) |
| Monitor | Polling until a condition is met | Orchestrator loops with custom intervals |

Here's a fan-out/fan-in orchestrator in action:

```javascript
const df = require("durable-functions");

// Orchestrator (durable-functions v3 with the Node.js v4 programming model)
df.app.orchestration("processOrderOrchestrator", function* (context) {
  const orderId = context.df.getInput();

  // Fan-out: schedule 3 activities without waiting on each one
  const tasks = [];
  tasks.push(context.df.callActivity("reserveInventory", orderId));
  tasks.push(context.df.callActivity("chargePayment", orderId));
  tasks.push(context.df.callActivity("sendConfirmation", orderId));

  // Fan-in: wait for all to complete
  const results = yield context.df.Task.all(tasks);

  return { orderId, steps: results };
});

// Each activity is registered separately, for example:
// df.app.activity("reserveInventory", { handler: (orderId) => { /* ... */ } });
```

Pro Tip: By default, Durable Functions stores orchestration state in Azure Storage (queues, tables, and blobs); alternative backends such as Netherite (Event Hubs + FASTER) are also available. For high-throughput orchestrations, switching to Netherite can cut latency and storage costs.

Benefits of Azure Serverless Architecture

Cost Efficiency (Pay-Per-Execution)

The Consumption plan's pricing model is straightforward: you pay for execution count (first 1 million executions/month free) and GB-seconds of resource consumption (first 400,000 GB-s/month free). A function executing 10 million times per month at 200 ms average duration and 128 MB memory costs roughly $2 USD: about $1.80 for the 9 million billable executions, while its 250,000 GB-s fall inside the free grant. The equivalent always-on VM costs $30–100+ per month.

For bursty, event-driven, or low-frequency workloads, this is a dramatic cost reduction.
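The arithmetic behind the 10-million-execution example can be sketched as a small calculator. The rates and free grants are assumptions based on long-standing Consumption list prices ($0.20 per million executions and $0.000016 per GB-s, with 1 million executions and 400,000 GB-s free each month); check the Azure pricing page for your region before relying on them. Storage account, networking, and monitoring charges are billed separately and are not included, which is why real bills land somewhat higher.

```javascript
// Rough Consumption-plan estimate. Rates and free grants are assumptions
// (long-standing list prices); verify them against current Azure pricing.
const PRICE_PER_MILLION_EXECUTIONS = 0.2; // USD
const PRICE_PER_GB_SECOND = 0.000016; // USD
const FREE_EXECUTIONS = 1_000_000; // per month
const FREE_GB_SECONDS = 400_000; // per month

function estimateConsumptionCost({ executions, avgDurationMs, memoryMb }) {
  // Memory is billed in 128 MB increments; duration has a 100 ms minimum.
  const billedMemoryGb = (Math.ceil(memoryMb / 128) * 128) / 1024;
  const billedSeconds = Math.max(avgDurationMs, 100) / 1000;
  const gbSeconds = executions * billedSeconds * billedMemoryGb;

  const executionCost =
    (Math.max(executions - FREE_EXECUTIONS, 0) / 1_000_000) * PRICE_PER_MILLION_EXECUTIONS;
  const computeCost = Math.max(gbSeconds - FREE_GB_SECONDS, 0) * PRICE_PER_GB_SECOND;

  return { gbSeconds, executionCost, computeCost, total: executionCost + computeCost };
}

const monthly = estimateConsumptionCost({
  executions: 10_000_000,
  avgDurationMs: 200,
  memoryMb: 128,
});
console.log(monthly); // gbSeconds 250000, total about 1.8 (USD)
```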

Auto-Scaling Without Infrastructure Management

Azure Functions scales from zero to hundreds of instances automatically. No autoscaling rules to write. No minimum or maximum instance counts to configure on Consumption. No reserved capacity to provision. The platform observes incoming event rates and spins up compute in seconds.

This elasticity shines in three scenarios:

- E-commerce flash sales: traffic spikes 100x in minutes

- Overnight batch processing: scale up, process, scale to zero

- Viral content: unpredictable load handled without intervention

Developer Productivity and Faster Time to Market

With infrastructure management eliminated, developers focus entirely on business logic. Key productivity gains:

- Local development with Azure Functions Core Tools mirrors the cloud runtime.

- Bindings eliminate SDK boilerplate for common integrations.

- The platform handles runtime updates, security patches, and scaling logic.

- Some teams report 30–50% faster deployment cycles compared to container-based microservices for equivalent functionality.

Built-in Enterprise Integration

Azure Functions integrates natively with many Azure and third-party services through its triggers and bindings, including Cosmos DB, Event Hubs, Blob Storage, SQL, SignalR, SendGrid, and Twilio. For Microsoft-ecosystem teams (.NET, Azure AD, Power Platform), this integration depth is a key differentiator over competing FaaS platforms.

Azure Functions Best Practices

Performance Optimization

Connection pooling is critical. Individual invocations are short-lived, but the instance that runs them is reused across many invocations. Initialize HTTP clients, database connections, and SDK clients once at module level and reuse them; never create them inside the function body.

```javascript
// BAD: creates a new client on every invocation
const { CosmosClient } = require("@azure/cosmos");

module.exports = async function (context, req) {
  const client = new CosmosClient(process.env.COSMOS_CONNECTION);
  // ...
};
```

```javascript
// GOOD: client initialized once at module scope, reused across invocations
const { CosmosClient } = require("@azure/cosmos");

const client = new CosmosClient(process.env.COSMOS_CONNECTION);

module.exports = async function (context, req) {
  const container = client.database("mydb").container("items");
  // ...
};
```

Additional performance guidelines:

- Keep functions single-purpose: one trigger, one responsibility.

- Use async/await throughout: never block the event loop with synchronous I/O.

- Set appropriate timeouts: the default is 5 minutes on Consumption; set the minimum your logic requires.

- Minimize cold dependency loading: lazy-load large modules only when needed.
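The module-scope and lazy-loading advice can be combined into one pattern: keep a single client at module scope, but create it on first use. This is a minimal sketch; `FakeDbClient`, `getClient`, and the `DB_CONNECTION` setting are hypothetical stand-ins for a real SDK client and its configuration.

```javascript
// Lazy, module-scoped client reuse. FakeDbClient is a hypothetical stand-in
// for an SDK client such as CosmosClient; creationCount only makes reuse visible.
let creationCount = 0;

class FakeDbClient {
  constructor(connectionString) {
    creationCount++;
    this.connectionString = connectionString;
  }

  async getItem(id) {
    return { id, source: this.connectionString };
  }
}

// Module scope: lives as long as the instance, across many invocations.
let client = null;

function getClient() {
  // Construct on first use, so a cold start doesn't pay for a client
  // that the first invocation never touches.
  if (client === null) {
    client = new FakeDbClient(process.env.DB_CONNECTION ?? "local-dev");
  }
  return client;
}

// Handler body: reuses the shared client instead of building a new one.
async function handler(request) {
  const item = await getClient().getItem(request.id);
  return { status: 200, body: item };
}

// Simulate three invocations landing on the same warm instance.
const responses = [];
const demo = (async () => {
  for (const id of ["a", "b", "c"]) {
    responses.push(await handler({ id }));
  }
  console.log(`invocations: ${responses.length}, clients created: ${creationCount}`);
})();
```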

Solving the Cold Start Problem

An Azure Functions cold start happens when a new instance must load the runtime, your code, and its dependencies before executing. On the Consumption plan, expect roughly 200 ms to 2 s, depending on language and bundle size.

Strategies to minimize cold starts:

- Flex Consumption plan: Configure always-ready instances for critical paths. You pay a baseline rate for those instances plus execution charges when they run, and nothing for on-demand instances that aren't running.

- Premium plan: Keeps a configurable number of instances pre-warmed at all times.

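- Reduce bundle size: Tree-shake dependencies and prune `node_modules` for Node.js apps.

- Benchmark your runtime: Cold start times vary by language, runtime version, and app size, and JVM startup often makes Java cold starts slower rather than faster. For .NET isolated worker apps, ReadyToRun compilation can reduce startup time.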

- Pre-warming via timer trigger: A low-frequency timer trigger on Consumption can reduce instance recycling, though the Flex plan is a cleaner solution.

Pro Tip: To avoid cold starts on a budget, the Flex Consumption plan's always-ready instance feature is the most cost-effective option in 2026. You pay a per-second rate only for the always-ready instances you configure, instead of the Premium plan's continuously billed minimum instance.

Security

- Managed Identity: Avoid storing connection strings or API keys as plain-text app settings (which your code reads as environment variables). Use system-assigned or user-assigned managed identities to authenticate to Key Vault, Storage, Cosmos DB, and other Azure services without credentials.

- Azure Key Vault: Store all secrets in Key Vault and reference them in app settings using the Key Vault reference syntax: `@Microsoft.KeyVault(SecretUri=...)`.

- VNet Integration: Premium, Flex Consumption, and Dedicated plans support VNet integration, allowing functions to reach private endpoints for databases and internal services.

- Function-level auth: Use `AuthorizationLevel.Function` or `AuthorizationLevel.Admin` (`authLevel: "function"` or `"admin"` in the Node.js v4 model) for HTTP triggers in production; never use `Anonymous` for sensitive endpoints without APIM or custom auth middleware.
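A simple pre-deployment check can back up the Key Vault guidance by flagging app settings that look like secrets but hold plain values. This is a hypothetical helper, not an Azure tool: `findPlaintextSecrets`, its name-based heuristic, and the sample settings (including the vault URI) are illustrative assumptions. The heuristic is deliberately coarse and will produce false positives, so treat its output as a review list.

```javascript
// Hypothetical lint for app settings: flag secret-looking names whose values
// are not Key Vault references. The name heuristic is an assumption.
const KEY_VAULT_REFERENCE = /^@Microsoft\.KeyVault\(.+\)$/;
const SECRET_LIKE_NAME = /(KEY|SECRET|PASSWORD|CONNECTION|TOKEN)/i;

function findPlaintextSecrets(appSettings) {
  return Object.entries(appSettings)
    .filter(([name, value]) => SECRET_LIKE_NAME.test(name) && !KEY_VAULT_REFERENCE.test(value))
    .map(([name]) => name);
}

// Sample settings (hypothetical values).
const settings = {
  FUNCTIONS_WORKER_RUNTIME: "node",
  PAYMENT_API_KEY:
    "@Microsoft.KeyVault(SecretUri=https://myvault.vault.azure.net/secrets/payment-api-key/)",
  COSMOS_CONNECTION: "AccountEndpoint=https://example.documents.azure.com:443/;AccountKey=abc123",
  AzureWebJobsStorage__accountName: "stserverlessapi",
};

const flagged = findPlaintextSecrets(settings);
console.log(flagged); // [ 'COSMOS_CONNECTION' ]
```

The identity-based `AzureWebJobsStorage__accountName` setting passes because it holds only an account name, not a credential.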

CI/CD and Infrastructure as Code

Define your function infrastructure in Bicep or Terraform to ensure reproducible deployments:

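```bicep
// Assumes functionAppName, location, hostingPlan, and storageAccount are
// declared elsewhere in the template
resource functionApp 'Microsoft.Web/sites@2023-01-01' = {
  name: functionAppName
  location: location
  kind: 'functionapp'
  identity: {
    type: 'SystemAssigned'
  }
  properties: {
    serverFarmId: hostingPlan.id
    siteConfig: {
      appSettings: [
        { name: 'FUNCTIONS_EXTENSION_VERSION', value: '~4' }
        { name: 'FUNCTIONS_WORKER_RUNTIME', value: 'node' }
        // Identity-based storage connection: no account key in app settings.
        // The managed identity also needs a storage data role assignment
        // (for example, Storage Blob Data Owner) on the account.
        { name: 'AzureWebJobsStorage__accountName', value: storageAccount.name }
      ]
    }
  }
}
```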

Use GitHub Actions or Azure Pipelines with the azure/functions-action to deploy on every merge to main. Enable deployment slots on Premium/Dedicated plans for zero-downtime blue/green deployments.

Real-World Use Cases for Azure Functions

IoT Data Processing Pipeline

An IoT solution ingests millions of telemetry events per day. Azure IoT Hub routes device messages to an Event Hub. An Event Hub-triggered Azure Function processes each batch: validates the payload, enriches with device metadata from Cosmos DB (via input binding), and writes processed records to a time-series store.

At peak load, Functions scales to hundreds of instances automatically; at night, it scales to zero, costing nothing.
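The per-batch work in this pipeline (validate, enrich, emit records) can be sketched as a pure function, which keeps it testable outside the Functions host. The telemetry shape, the `deviceMetadata` map standing in for Cosmos DB documents delivered by an input binding, and the choice to set invalid events aside rather than throw are all assumptions.

```javascript
// Per-batch logic for an Event Hub-triggered function (sketch).
// Assumptions (hypothetical): telemetry shape { deviceId, temperature, ts };
// deviceMetadata stands in for documents from a Cosmos DB input binding.
const deviceMetadata = new Map([
  ["dev-1", { site: "plant-a", model: "TX100" }],
  ["dev-2", { site: "plant-b", model: "TX200" }],
]);

function isValid(event) {
  return (
    typeof event.deviceId === "string" &&
    Number.isFinite(event.temperature) &&
    !Number.isNaN(Date.parse(event.ts))
  );
}

function processBatch(events) {
  const records = [];
  const rejected = [];
  for (const event of events) {
    const meta = deviceMetadata.get(event.deviceId);
    if (!isValid(event) || !meta) {
      rejected.push(event); // set aside for inspection instead of failing the batch
      continue;
    }
    // Enrich with device metadata before writing to the time-series store.
    records.push({ ...event, ...meta, processedAt: new Date().toISOString() });
  }
  return { records, rejected };
}

const { records, rejected } = processBatch([
  { deviceId: "dev-1", temperature: 21.5, ts: "2025-01-01T00:00:00Z" },
  { deviceId: "dev-2", temperature: "hot", ts: "2025-01-01T00:00:01Z" }, // invalid reading
  { deviceId: "dev-9", temperature: 19.0, ts: "2025-01-01T00:00:02Z" }, // unknown device
]);
console.log(records.length, rejected.length); // 1 2
```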

E-Commerce Order Processing

When a customer places an order, a Durable Functions orchestration kicks off: activity functions run in parallel to reserve inventory, charge the payment method, and send a confirmation email.

For high-value orders flagged for fraud review, the orchestrator pauses and waits for a human approval event before continuing. The fan-out/fan-in pattern completes all parallel steps before proceeding to fulfillment. This Durable Functions workflow replaces complex state machine code with readable orchestrator logic.

Real-Time File Processing

A legal firm uploads contracts (PDFs) to Blob Storage. A Blob trigger fires an Azure Function for each upload, which invokes Azure AI Document Intelligence to extract key clauses, writes structured results to Cosmos DB, and notifies reviewers via a Service Bus queue. The entire pipeline is serverless: no containers, no orchestration platform, no idle cost.

Scheduled Data Synchronization

A SaaS platform syncs customer data from a third-party CRM every 15 minutes using a Timer trigger. The function fetches delta records from the CRM API, upserts to an internal database, and publishes change events to Event Grid for downstream consumers. Execution takes 30–90 seconds; the Consumption plan costs pennies per day.
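The delta-sync step can be sketched with a watermark: keep the newest `updatedAt` value seen so far and only upsert records changed after it. The record shape, the `Map` standing in for the internal database, and the event names are hypothetical. In a real function the watermark must be persisted between timer runs, and a 15-minute schedule corresponds to the NCRONTAB expression `0 */15 * * * *`.

```javascript
// Hypothetical delta sync: CRM records carry an ISO-8601 UTC `updatedAt`,
// `db` (a Map) stands in for the internal database.
function syncDelta(crmRecords, db, lastWatermark) {
  // ISO-8601 UTC strings in one consistent format sort lexicographically,
  // so plain string comparison orders them by time.
  const changed = crmRecords.filter((r) => r.updatedAt > lastWatermark);
  const changeEvents = [];

  for (const record of changed) {
    const existed = db.has(record.id);
    db.set(record.id, record); // upsert
    changeEvents.push({ type: existed ? "CustomerUpdated" : "CustomerCreated", id: record.id });
  }

  // Advance to the newest change seen; never move the watermark backwards.
  const newWatermark = changed.reduce(
    (max, r) => (r.updatedAt > max ? r.updatedAt : max),
    lastWatermark
  );
  return { changeEvents, newWatermark };
}

const db = new Map([["c1", { id: "c1", name: "Ada", updatedAt: "2025-03-01T10:00:00Z" }]]);
const crmRecords = [
  { id: "c1", name: "Ada L.", updatedAt: "2025-03-01T10:20:00Z" },
  { id: "c2", name: "Grace", updatedAt: "2025-03-01T10:25:00Z" },
  { id: "c3", name: "Linus", updatedAt: "2025-03-01T09:00:00Z" }, // older than the watermark
];
const syncResult = syncDelta(crmRecords, db, "2025-03-01T10:15:00Z");
console.log(syncResult.changeEvents, syncResult.newWatermark);
```

The `changeEvents` array is what would then be published to Event Grid. The strict `>` comparison skips records stamped exactly at the watermark; if your source can produce late writes with equal timestamps, re-reading a small overlap window and relying on idempotent upserts is a safer design.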

Chatbot / AI Integration Backend

An enterprise chatbot routes user messages to an HTTP-triggered Azure Function that calls Azure OpenAI with a retrieval-augmented generation (RAG) prompt, grounding responses in internal knowledge base documents retrieved from Azure AI Search. Responses stream back via Server-Sent Events. Functions scales from 0 to 500 concurrent users without configuration changes.

Azure Functions vs AWS Lambda vs Google Cloud Functions

| Feature | Azure Functions | AWS Lambda | Google Cloud Functions |
|---|---|---|---|
| Languages | .NET, Node, Python, Java, PowerShell, custom | Node, Python, Java, .NET, Go, Ruby, custom | Node, Python, Java, Go, Ruby, .NET, PHP |
| Max execution time | 10 minutes on Consumption (5 by default); unbounded on Flex Consumption, Premium, and Dedicated; HTTP responses capped at 230 seconds on all plans | 15 minutes | 60 minutes (2nd gen HTTP functions); 9 minutes (event-driven) |
| Pricing model | Per-execution + GB-s; Flex/Premium for always-warm | Per-execution + GB-s; Provisioned Concurrency for warm | Per-invocation + compute time (GB-s and GHz-s) |
| Ecosystem integration | Deep Microsoft/Azure integration (AD, DevOps, M365) | Deep AWS integration (IAM, CloudWatch, 200+ triggers) | Deep GCP integration (Pub/Sub, BigQuery, Firebase) |
| Cold start | Variable; Flex Consumption minimizes | Variable; Provisioned Concurrency eliminates | Variable; min instances available |
| Stateful workflows | Durable Functions (native) | Step Functions (separate service) | Workflows (separate service) |
| VNet support | Yes (Premium/Dedicated/Flex) | Yes (VPC Lambda) | Yes (VPC connector) |


Getting Started: Build Your First Serverless API

Prerequisites:

```bash
# Install Azure Functions Core Tools v4
npm install -g azure-functions-core-tools@4 --unsafe-perm true

# Install Azure CLI
brew install azure-cli # macOS
# or: winget install Microsoft.AzureCLI

# Login
az login
```

Step 1: Create a New Function App Locally

```bash
# Create project directory
func init my-serverless-api --worker-runtime node --language javascript
cd my-serverless-api

# Add an HTTP trigger function
func new --name GetProducts --template "HTTP trigger" --authlevel anonymous
```

Step 2: Write Your Function

Edit src/functions/GetProducts.js:

```javascript
const { app } = require("@azure/functions");

// Simulated product data (replace with a Cosmos DB binding in production)
const products = [
  { id: "1", name: "Widget Pro", category: "tools", price: 29.99, stock: 150 },
  { id: "2", name: "Gadget Plus", category: "electronics", price: 49.99, stock: 75 },
];

app.http("GetProducts", {
  methods: ["GET"],
  authLevel: "anonymous",
  route: "products",
  handler: async (request, context) => {
    context.log("GetProducts function triggered");

    // Optional filter, e.g. /api/products?category=tools
    const category = request.query.get("category");
    const filtered = category
      ? products.filter((p) => p.category === category)
      : products;

    return {
      status: 200,
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ products: filtered, count: filtered.length }),
    };
  },
});
```

Step 3: Test Locally

```bash
func start
# Outputs: GetProducts: [GET] http://localhost:7071/api/products

# In a second terminal:
curl http://localhost:7071/api/products
# {"products":[...],"count":2}
```

Step 4: Deploy to Azure

```bash
# Create resource group
az group create --name rg-serverless-api --location eastus

# Create storage account (required for Functions); note the generated name
az storage account create \
  --name stserverlessapi$RANDOM \
  --resource-group rg-serverless-api \
  --sku Standard_LRS

# Create Function App (Flex Consumption - recommended); note the generated name
az functionapp create \
  --resource-group rg-serverless-api \
  --name my-serverless-api-$RANDOM \
  --storage-account <storage-name> \
  --flexconsumption-location eastus \
  --runtime node \
  --runtime-version 20

# Deploy your code
func azure functionapp publish <function-app-name>
```

Your API is now live at https://<function-app-name>.azurewebsites.net/api/products.

Monitoring and Troubleshooting

Application Insights Integration

Every production Azure Functions app needs Application Insights enabled. It gives you distributed tracing, dependency tracking, live metrics, failure analysis, and custom telemetry with zero code changes for built-in instrumentation.

Enable it during deployment:

```bash
az monitor app-insights component create \
  --app my-functions-insights \
  --resource-group rg-serverless-api \
  --location eastus

# Use the connectionString value from the output of the command above
az functionapp config appsettings set \
  --name <function-app-name> \
  --resource-group rg-serverless-api \
  --settings APPLICATIONINSIGHTS_CONNECTION_STRING="<connection-string>"
```

Key metrics to alert on:

| Metric | What It Tells You | Alert Threshold |
|---|---|---|
| requests/failed | Function execution failures | > 1% error rate |
| performanceCounters/exceptionsPerSecond | Unhandled exceptions | Any spike |
| customMetrics/Function Execution Count | Invocation volume | Deviation from baseline |
| dependencies/duration | Downstream service latency | > p99 SLA threshold |
| performanceCounters/requestExecutionTime | Function duration | > 80% of the configured timeout |

Common Issues and Fixes

| Issue | Cause | Fix |
|---|---|---|
| Cold start latency spikes | Consumption plan, large bundle | Switch to Flex Consumption with always-ready instances; tree-shake bundle |
| Function timeout errors | Long-running operations exceeding limit | Increase timeout in host.json; use Durable Functions for workflows > 5 min |
| Out of memory on Consumption | Memory-intensive processing | Upgrade to Premium or stream data instead of loading full datasets |
| Queue message reprocessing | Function failure + retry policy | Implement idempotency keys; configure max delivery count on Service Bus |
| Connection pool exhaustion | New clients created per invocation | Move client initialization outside function handler (module scope) |
| Missing logs in App Insights | Sampling configured too aggressively | Adjust samplingSettings in host.json; set excludedTypes appropriately |

Pro Tip: Use Application Insights Live Metrics stream during deployments and load tests to catch issues in real time before they affect users at scale.

Cost Optimization Tips

Right-Size Your Hosting Plan

Don't default to Premium if your workload doesn't need it. Use this decision tree:

- Traffic < 1M executions/month + cold starts acceptable → Consumption

- Traffic > 1M executions/month + cold starts matter → Flex Consumption with 1–2 always-ready instances

- VNet required + sustained high concurrency → Premium

- Predictable baseline load + existing App Service plan → Dedicated
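The decision tree can also be written as a small function, which makes the gaps between its branches explicit. This is a sketch under stated assumptions: the parameter names are hypothetical, reusing an existing App Service plan is checked first, a VNet requirement without sustained concurrency maps to Flex Consumption (the Consumption plan has no VNet integration), and where the tree is silent, cold-start tolerance decides.

```javascript
// Hypothetical encoding of the hosting-plan decision tree.
function choosePlan({
  coldStartsMatter = false,
  vnetRequired = false,
  sustainedHighConcurrency = false,
  predictableBaseline = false,
  existingAppServicePlan = false,
} = {}) {
  // Reuse capacity you already pay for when load is predictable.
  if (predictableBaseline && existingAppServicePlan) return "Dedicated";

  // Consumption has no VNet integration, so a VNet requirement rules it out.
  if (vnetRequired) {
    return sustainedHighConcurrency ? "Premium" : "Flex Consumption";
  }

  // Where the tree is silent, cold-start tolerance decides.
  return coldStartsMatter ? "Flex Consumption" : "Consumption";
}

console.log(choosePlan({ coldStartsMatter: false })); // Consumption
console.log(choosePlan({ coldStartsMatter: true })); // Flex Consumption
console.log(choosePlan({ vnetRequired: true, sustainedHighConcurrency: true })); // Premium
console.log(choosePlan({ predictableBaseline: true, existingAppServicePlan: true })); // Dedicated
```

When the result is Flex Consumption for a high-traffic, latency-sensitive app, start with the 1–2 always-ready instances the tree suggests.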

Use Durable Functions for Long Workflows

Polling-based patterns, where a function loops and waits for a condition, consume compute throughout. Replace them with Durable Functions' Monitor pattern: the orchestrator sleeps between checks, consuming no compute during waits. For a workflow that polls every 60 seconds for up to 24 hours, this can reduce compute consumption by 99%.
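The 99% figure is easy to sanity-check. The sketch below compares a function that stays busy for the full 24-hour window with a Monitor orchestration that only uses compute while checking. The 0.5-second cost per check is a hypothetical value and orchestrator replay overhead is ignored; note also that on the Consumption plan a plain function could not actually run for 24 hours, since executions are capped at 10 minutes.

```javascript
// Compute used by a busy-waiting function vs. a Monitor orchestration.
// checkSeconds is a hypothetical per-check cost; replay overhead is ignored.
function pollingCompute({ windowSeconds, intervalSeconds, checkSeconds }) {
  const checks = Math.floor(windowSeconds / intervalSeconds);
  const busyLoopSeconds = windowSeconds; // the looping function runs the whole window
  const monitorSeconds = checks * checkSeconds; // compute only while a check runs
  return {
    checks,
    busyLoopSeconds,
    monitorSeconds,
    reduction: 1 - monitorSeconds / busyLoopSeconds,
  };
}

const polling = pollingCompute({ windowSeconds: 24 * 60 * 60, intervalSeconds: 60, checkSeconds: 0.5 });
console.log(polling.checks, polling.monitorSeconds, `${(polling.reduction * 100).toFixed(1)}%`);
// 1440 720 99.2%
```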

Implement Proper Timeout and Retry Policies

Set the minimum timeout your functions require in host.json:

```json
{
  "version": "2.0",
  "functionTimeout": "00:05:00",
  "extensions": {
    "queues": {
      "maxPollingInterval": "00:00:02",
      "visibilityTimeout": "00:00:30",
      "maxDequeueCount": 5
    }
  }
}
```

Short timeouts prevent runaway executions from accumulating cost. A bounded maxDequeueCount keeps poison messages from being retried indefinitely: after that many failed attempts, the Queue Storage trigger moves the message to a `<queue-name>-poison` queue.

Avoid Chatty Bindings

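Batch processing is dramatically more cost-efficient than per-item processing. When using Event Hubs or Service Bus triggers, configure batching (for example, maxEventBatchSize for Event Hubs or maxMessageBatchSize for Service Bus in host.json with current extension versions, with the trigger's cardinality set to many) so each invocation processes multiple messages rather than one.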
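A quick calculation shows why batching matters. The message volume, batch size, and 20 ms of fixed per-invocation overhead (deserialization, logging, client checkout) below are hypothetical; the point is that fixed costs scale with invocation count, not message count. The calculation assumes every batch is full, which overstates the savings when traffic is light.

```javascript
// Compare invocation counts and fixed overhead with and without batching.
// All inputs are hypothetical; every batch is assumed to be full.
function batchingComparison({ messages, batchSize, overheadMsPerInvocation }) {
  const perMessageInvocations = messages;
  const batchedInvocations = Math.ceil(messages / batchSize);
  return {
    perMessageInvocations,
    batchedInvocations,
    // Fixed per-invocation work multiplies by invocation count, not message count.
    perMessageOverheadSec: (perMessageInvocations * overheadMsPerInvocation) / 1000,
    batchedOverheadSec: (batchedInvocations * overheadMsPerInvocation) / 1000,
  };
}

const comparison = batchingComparison({
  messages: 1_000_000,
  batchSize: 100,
  overheadMsPerInvocation: 20,
});
console.log(
  comparison.perMessageInvocations,
  comparison.batchedInvocations,
  comparison.perMessageOverheadSec,
  comparison.batchedOverheadSec
);
// 1000000 10000 20000 200
```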

Azure Cost Management Integration

Enable Azure Cost Management budgets and alerts for your function app's resource group. Set alerts at 50%, 80%, and 100% of your monthly budget. For Consumption plan apps, unexpected cost spikes usually indicate a bug (an infinite retry loop, an accidental high-frequency timer); alerts catch these before the bill arrives.

Pro Tip: Optimizing function performance also reduces cost: faster functions consume fewer GB-seconds. Profile with Application Insights to identify and fix slow code paths.

Conclusion

Azure Functions serverless architecture delivers a combination of developer productivity, operational simplicity, and cost efficiency for modern cloud applications. Key takeaways from this guide:

- Choose the right hosting plan: Flex Consumption is the 2026 recommended default for production workloads balancing cold start performance and cost.

- Use Durable Functions for any workflow that requires state, parallel execution, or human interaction; it's one of Azure's most powerful and underutilized features.

- Apply azure functions best practices from day one: module-level connection pooling, Managed Identity for secrets, Application Insights for observability.

- Azure Functions scales from side projects to enterprise systems without infrastructure changes.

Start building today. Provision your first function app on the Consumption plan, whose monthly free grant includes 1 million executions. Then explore Durable Functions to unlock stateful serverless patterns that solve real distributed systems challenges.

The serverless-first mindset isn't just an architectural preference; it's a competitive advantage. Every minute you spend managing servers is a minute you're not shipping features.

>>> Follow and Contact Relia Software for more information!
